How to use Event Storming and DDD for Evolutionary Architecture

Big Picture Event Storming and DDD let us share an architecture vision. Here is a way to realize it through evolutionary architecture and emergent design.

This is the 14th post in a series about how to use Event Storming to kick start architecture on good tracks. This post builds on the previous ones, so it might be a good idea to start reading from the beginning. This post will interest you if you are starting a new product or feature.

Let’s suppose we went through the workshops from the previous posts. We should have a good idea of where we would like to eventually be. Here is the tricky part. We don’t want to waste time building this whole vision right from the start. We need something out of the door fast. We also want a sustainable pace, so we must avoid quick and dirty solutions. Remember the beginning of this series about Event Storming? I said that it complements evolutionary architecture and emergent design. Let’s see how in more detail.

How can we build something incrementally, without sacrificing our target vision?

Principles

The good news is that we won’t need another intense workshop to get going: we already have everything we need.

We’ll need some skills and practices though.

Technical Debt Leverage

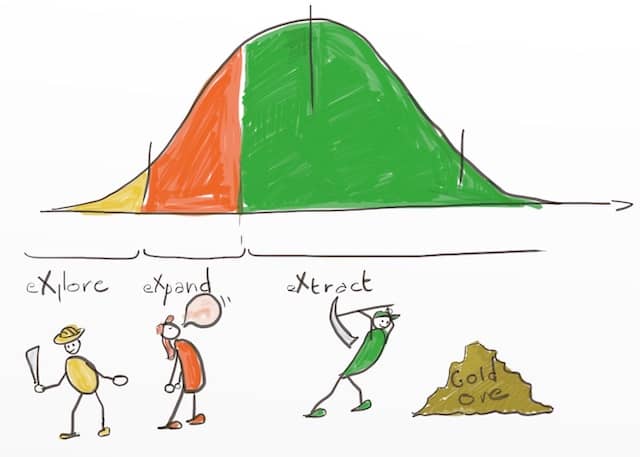

What we’ll need is smart technical debt management. Eric Dietrich calls this Technical Leverage.

We need to get features out fast. The trick is to make things as simple as possible at the beginning while keeping the ability to refactor. We should not skip steps that are mandatory to let us refactor to the target vision later down the road. For example, suppose we know we’ll need a separate process someday. If we currently only have a single team and the NFRs are fine, then modularity is enough for now. We don’t want to skip modularity though, as it would make future refactoring too difficult.

💡 The trick is to make things as simple as possible at the beginning while keeping the ability to refactor.
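To make the modularity example more concrete, here is a minimal sketch of two modules living in a single process. All names (`Catalog`, `Ordering`, `Price`) are hypothetical and do not come from the workshops. The point is only that `Ordering` talks to `Catalog` through a narrow boundary, so the in-process implementation could later be replaced by a client of a separate service.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class Price:
    amount_cents: int
    currency: str


class Catalog(Protocol):
    """Public boundary of the (hypothetical) catalog module."""

    def price_of(self, product_id: str) -> Price: ...


class InProcessCatalog:
    """Today's simple implementation: a plain in-memory lookup."""

    def __init__(self, prices: dict[str, Price]) -> None:
        self._prices = prices

    def price_of(self, product_id: str) -> Price:
        return self._prices[product_id]


class Ordering:
    """Depends only on the Catalog boundary, never on its internals."""

    def __init__(self, catalog: Catalog) -> None:
        self._catalog = catalog

    def total_cents(self, product_ids: list[str]) -> int:
        return sum(self._catalog.price_of(p).amount_cents for p in product_ids)


catalog = InProcessCatalog({"book": Price(1500, "EUR"), "pen": Price(200, "EUR")})
ordering = Ordering(catalog)
print(ordering.total_cents(["book", "pen", "pen"]))  # 1900
```

If the catalog becomes its own process someday, only a new class implementing `price_of` is needed. `Ordering` should not have to change.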

Another obvious step we cannot skip is automated testing. Even better, I’d say ‘fast’ tests. It does not matter whether they are unit, integration, or end-to-end tests. What matters is that they are fast enough to enable a fast refactoring feedback loop.
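One possible way to keep an eye on test speed is to run the suite against a time budget. The sketch below is only an illustration: `InMemoryOrders`, the test case, and the one-second budget are all assumptions, and it uses nothing beyond the standard `unittest` module.

```python
import io
import time
import unittest


class InMemoryOrders:
    """Hypothetical stand-in for a slower, database-backed repository."""

    def __init__(self):
        self._orders = {}

    def save(self, order_id, lines):
        self._orders[order_id] = list(lines)

    def lines_of(self, order_id):
        return self._orders[order_id]


class OrderStorageTest(unittest.TestCase):
    def test_saved_order_can_be_read_back(self):
        orders = InMemoryOrders()
        orders.save("o-1", ["book", "pen"])
        self.assertEqual(orders.lines_of("o-1"), ["book", "pen"])


def run_with_budget(test_case, budget_seconds):
    """Run a test case; report whether it passed and whether it stayed within budget."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(test_case)
    runner = unittest.TextTestRunner(stream=io.StringIO(), verbosity=0)
    started = time.perf_counter()
    result = runner.run(suite)
    elapsed = time.perf_counter() - started
    return result.wasSuccessful(), elapsed <= budget_seconds


passed, fast_enough = run_with_budget(OrderStorageTest, budget_seconds=1.0)
print(passed, fast_enough)
```

The budget itself is a team decision. What matters is that a slow suite gets noticed before it discourages refactoring.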

Other famous authors also wrote about this principle:

- Dan North’s Spike and Stabilize technique contains more practical advice about how to do it.

Incremental Refactoring Techniques

Obviously, on this path, you’ll need to master incremental refactoring techniques. Without them, it will be very difficult to transform the system as you go. Martin Fowler’s Refactoring book is the perfect reference to learn these techniques.

If you can, start a coding dojo to practice and master these techniques.

One last piece of advice: whenever you need to refactor your code, do so in the direction of your vision. Let’s come back to our modularity example. We can give our modules the same boundaries as our expected future services.
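Module boundaries tend to erode unless something checks them. Here is a rough sketch of such a check, built on Python's standard `ast` module. The module names and the convention that other modules are only reachable through `<module>.api` are assumptions made for the example.

```python
import ast

# Hypothetical modules, named after the expected future services.
MODULES = {"catalog", "ordering", "billing"}


def boundary_violations(source: str, own_module: str) -> list[str]:
    """List imports that reach into another module's internals.

    Assumed convention: other modules may only be imported through
    their `<module>.api` entry point.
    """
    violations = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            imported = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.level == 0 and node.module:
            imported = [node.module]
        else:
            continue
        for name in imported:
            parts = name.split(".")
            crosses_boundary = parts[0] in MODULES and parts[0] != own_module
            if crosses_boundary and parts[:2] != [parts[0], "api"]:
                violations.append(name)
    return violations


ordering_source = """\
from catalog.api import price_of
from catalog.storage import PriceTable
import billing.internal.ledger
from ordering.cart import Cart
"""
print(boundary_violations(ordering_source, own_module="ordering"))
```

On this sample, the check flags `catalog.storage` and `billing.internal.ledger`, and accepts the import through `catalog.api` as well as the import inside `ordering` itself. Running something like this in the test suite is one way to keep today's modules aligned with tomorrow's services.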

What’s the path then?

Remember the 2 scenarios from the previous workshop? We ended up with 2 functional architectures:

- That of the veteran startup

- That of the college dropout startup

The veteran architecture should be our end goal. Let’s use the college dropout architecture as our starting point. The 2 architectures follow the same boundaries, so it should be possible to migrate from one to the other.

Remember that we constrained the DDD domain relationships available to the college dropouts. This architecture will use customer-supplier, ball of mud, and inner source relationships. This is the classic way monoliths are born. While the team and the code are small, this monolith should remain manageable.

The veteran target architecture will contain services and Anti-Corruption Layers. With tests and a modular monolith, we should be able to incrementally refactor to the vision. That’s the Event Storming, DDD, evolutionary architecture and emergent design synergy.
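For readers who have not met an Anti-Corruption Layer yet, here is a minimal sketch of the idea: a small translator that keeps another context's vocabulary out of our own model. The billing payload and its field names (`acct_no`, `fname`, `lname`, `status`) are invented for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Customer:
    """Model of our own bounded context."""

    customer_id: str
    full_name: str
    can_order: bool


class BillingAntiCorruptionLayer:
    """Translates a (hypothetical) upstream billing payload into our model."""

    def to_customer(self, payload: dict) -> Customer:
        return Customer(
            customer_id=str(payload["acct_no"]),
            full_name=f'{payload["fname"]} {payload["lname"]}'.strip(),
            can_order=payload.get("status") == "ACTIVE",
        )


acl = BillingAntiCorruptionLayer()
customer = acl.to_customer(
    {"acct_no": 42, "fname": "Ada", "lname": "Lovelace", "status": "ACTIVE"}
)
print(customer)
```

In the modular monolith, this translator can start as a plain class at the edge of a module. It is then already in place when the other side moves behind a service.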

Don’t forget, the main point is to be able to deliver features early.

Tips

Here are some tips to get the most out of this practice:

- Repeat. Nothing prevents us from running a new Event Storming from time to time. Every time we do it, it will be faster, as more and more knowledge is shared in the team. By repeating it, the target architecture will evolve with the domain knowledge.

- As you focus on a bounded context, you can also run finer-grained, Design Level Event Storming sessions. This shorter workshop yields a more detailed target design for one bounded context.

- I found the `// TODO XXX comment ...` technique great at taking technical debt leverage. You can read more about it here. Other interesting techniques are Architecture Decision Records and Living Documentation. By documenting past decisions, they help us to change the system later down the road.

💡 By documenting past decisions, Living Documentation lets us change the system later down the road.
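TODO comments only help if they stay visible. The following sketch is not the technique from the linked article, just one generic way to list such markers so the debt we took on can be reviewed. The regular expression and the sample source are assumptions.

```python
import re

# Matches "# TODO ..." and "// TODO ..." style comments (assumed conventions).
TODO_PATTERN = re.compile(r"(?:#|//)\s*TODO\b[:\s]*(?P<note>.*)")


def list_todos(source: str) -> list[tuple[int, str]]:
    """Return (line number, note) pairs for every TODO comment found."""
    todos = []
    for number, line in enumerate(source.splitlines(), start=1):
        match = TODO_PATTERN.search(line)
        if match:
            todos.append((number, match.group("note").strip()))
    return todos


sample = """\
def total(order):
    # TODO extract pricing into its own module
    return sum(order)
// TODO: replace with a call to the future billing service
"""

for line_number, note in list_todos(sample):
    print(f"line {line_number}: {note}")
```

A list like this could feed a regular review of what is left to refactor toward the target architecture.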

Summary

If you went through the Big Picture Event Storming, you have everything you need to get started today.

Are you lacking evolutionary architecture or emergent design skills? Start your coding dojo today to improve your refactoring skills.

Are you afraid that this strategy will lead to unsolvable NFR problems at the end? For example, who said that this target architecture is going to handle the load we’ll need? You are right, and that is what I am going to talk about in the next post.

This was the 14th post in a series about how to use Event Storming to kick start architecture on good tracks.
